7 AI tools your localization team needs to master in the GenAI era

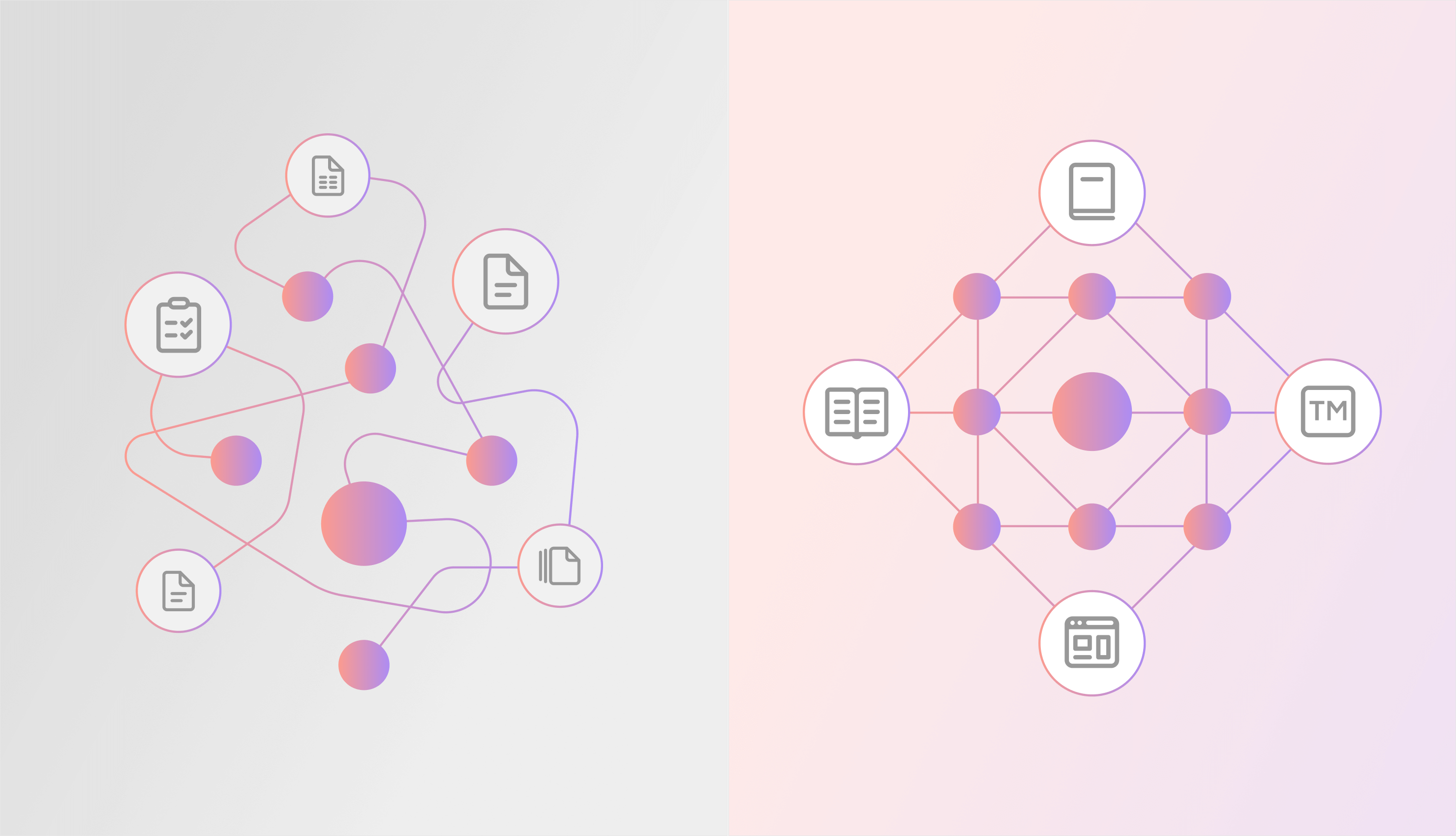

GenAI isn’t just changing how translations are produced. It’s reshaping the entire localization workflow. Most teams didn’t build their workflows for this shift. They’re still relying on a mix of spreadsheets, standalone MT engines, and manual file handoffs. It’s familiar, and for a while, it worked. But it was never designed for the scale teams are dealing with now.

Updated on May 5, 2026·Mia Comic

AI translation with glossary support: Deterministic terminology for LLMs

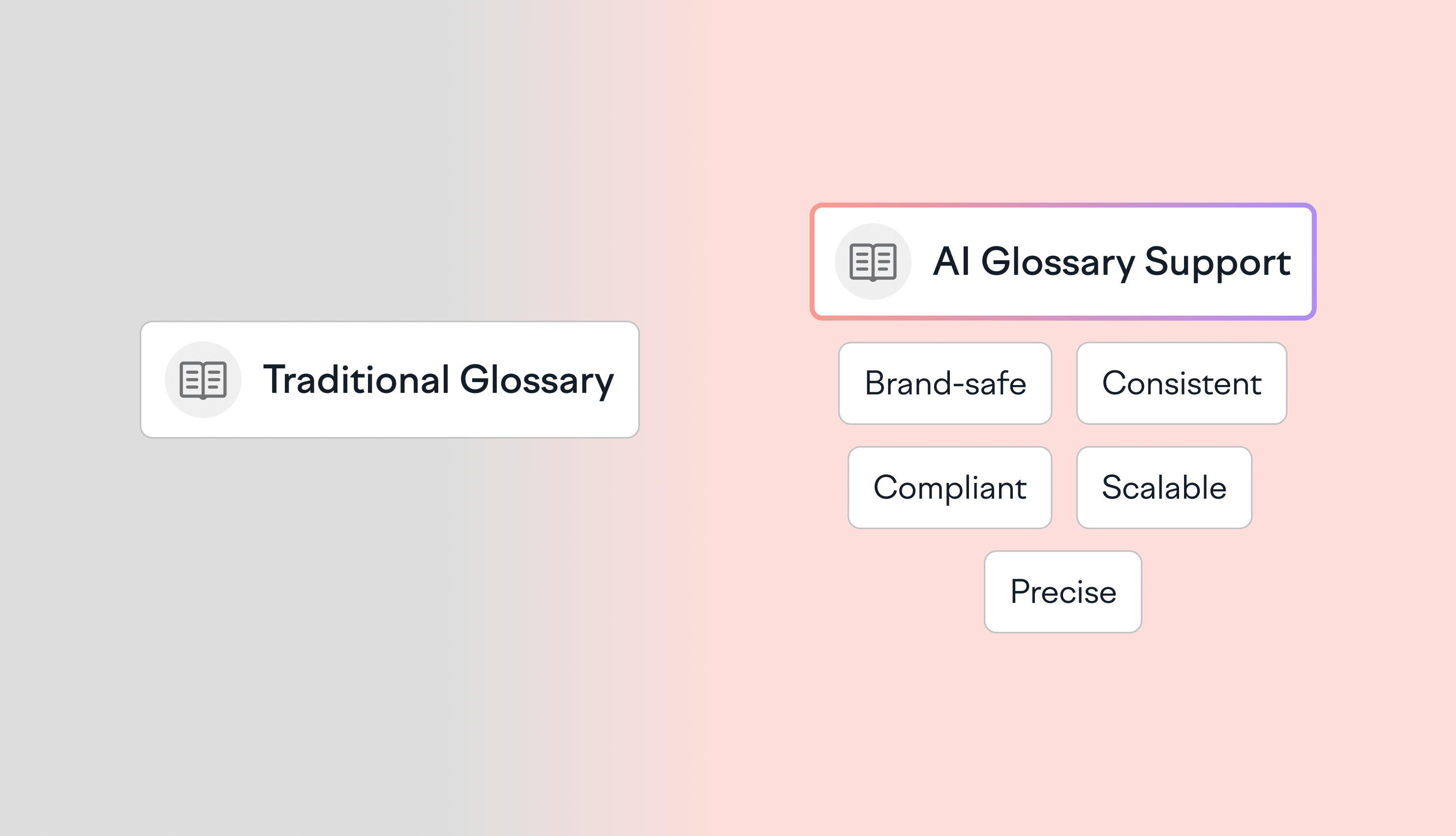

LLMs are fluent in generating outputs, but they're not faithful to your brand. When a general-purpose LLM translates your product UI into 15 languages, it doesn't know which terms are trademarked, which phrases have legal restrictions, or which features are deprecated. It makes a statistical guess. At scale, this guesswork can lead to major inconsistencies, compliance risks, and a post-editing overload. The challenge: AI models’ probabilistic outputs are not ideal

Updated on April 26, 2026·Shreelekha Singh

The 5 best tools for AI translation post-editing (MTPE)

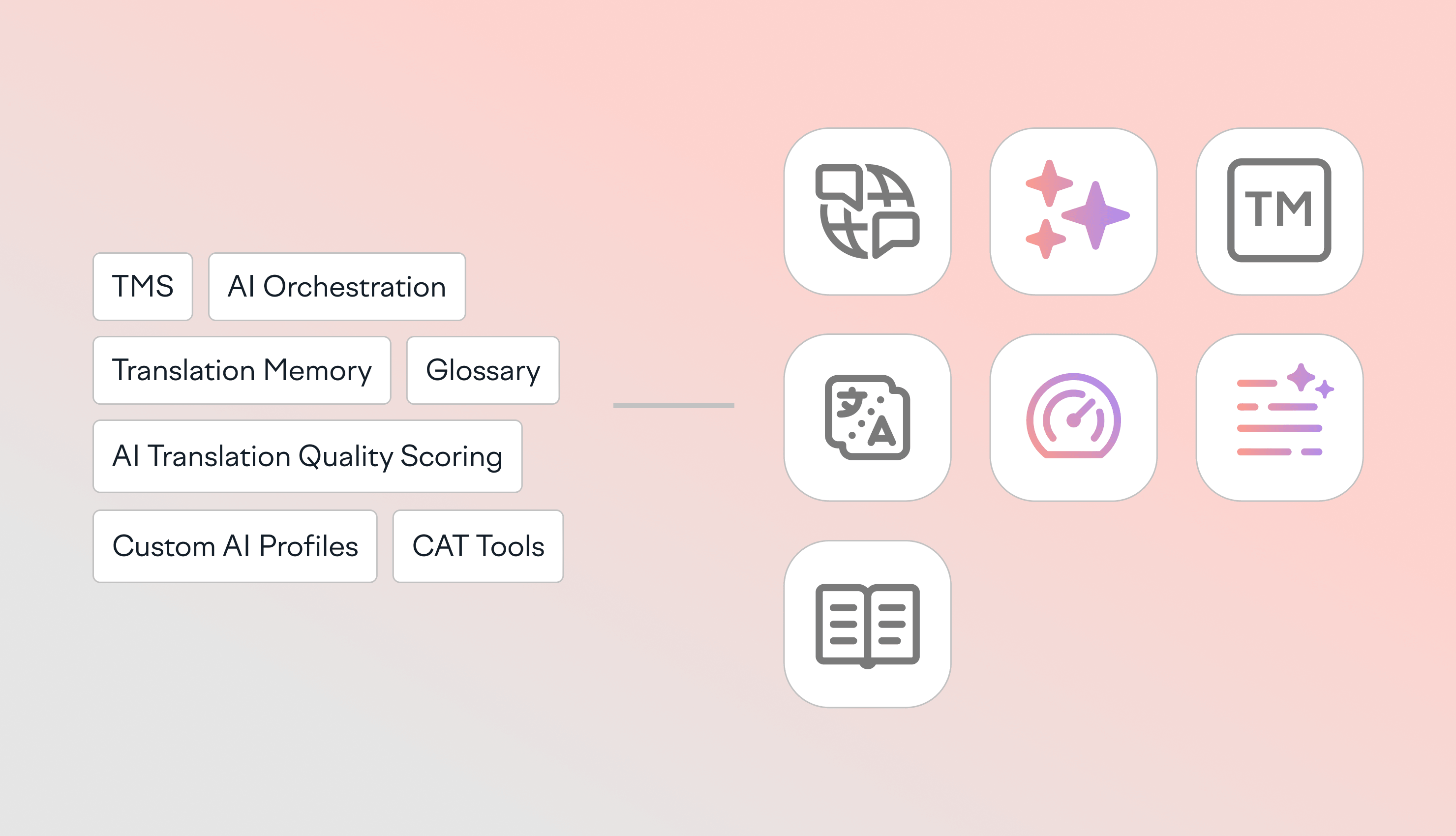

AI translation post-editing tools promise 40-60% cost savings. In practice, you only get those savings when AI output is controlled. This means your terminology is enforced, risky segments are flagged before they go live, and linguists only touch what truly needs human attention. That’s why the best MTPE tools today aren’t standalone CAT tools or MT engines. They’re actually translation management systems (TMS) that orchestrate AI, terminology, and quality assurance in one place.

Updated on March 30, 2026·Mia Comic

AI vs human translation cost: How to cut localization costs by up to 97%

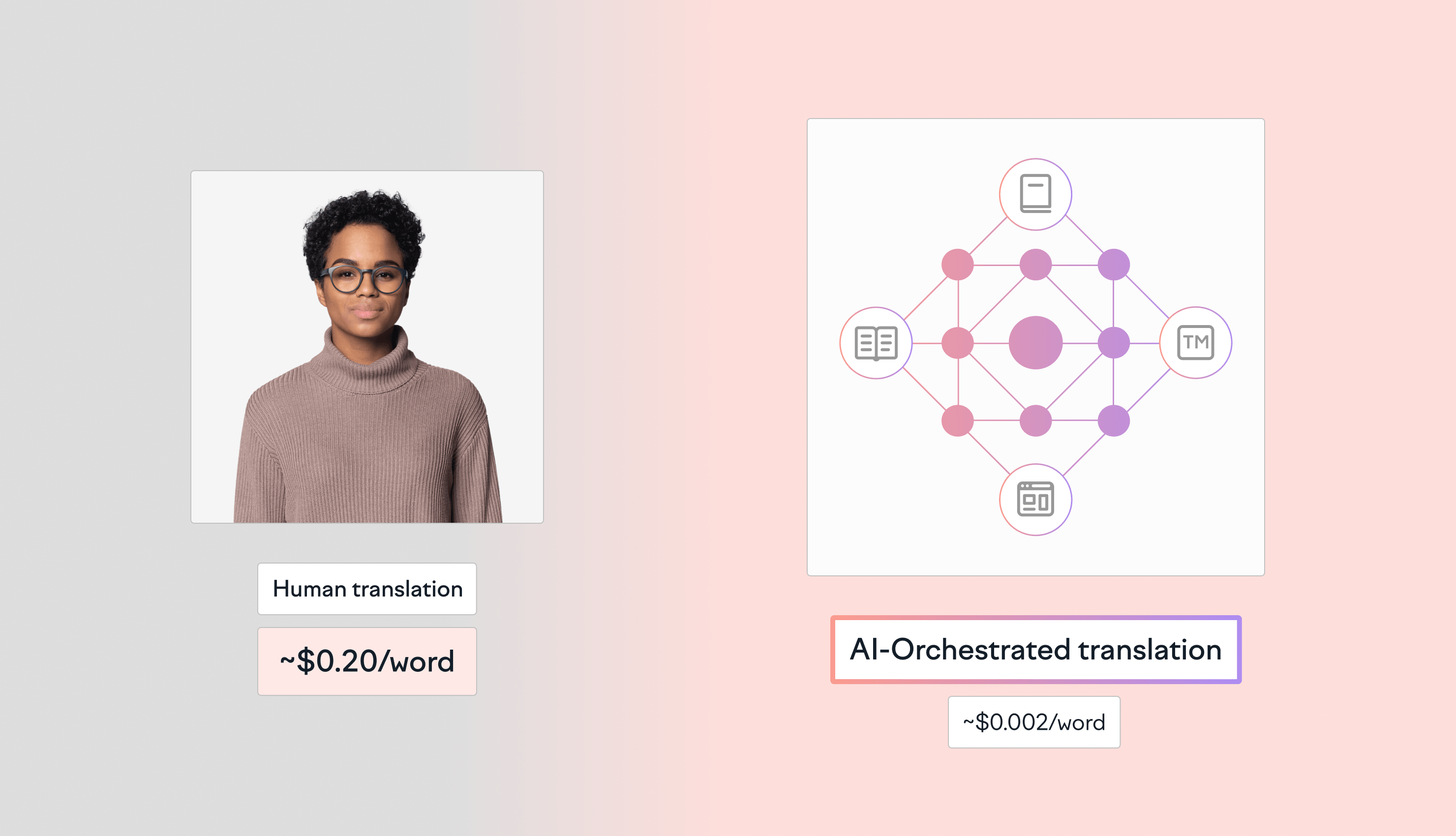

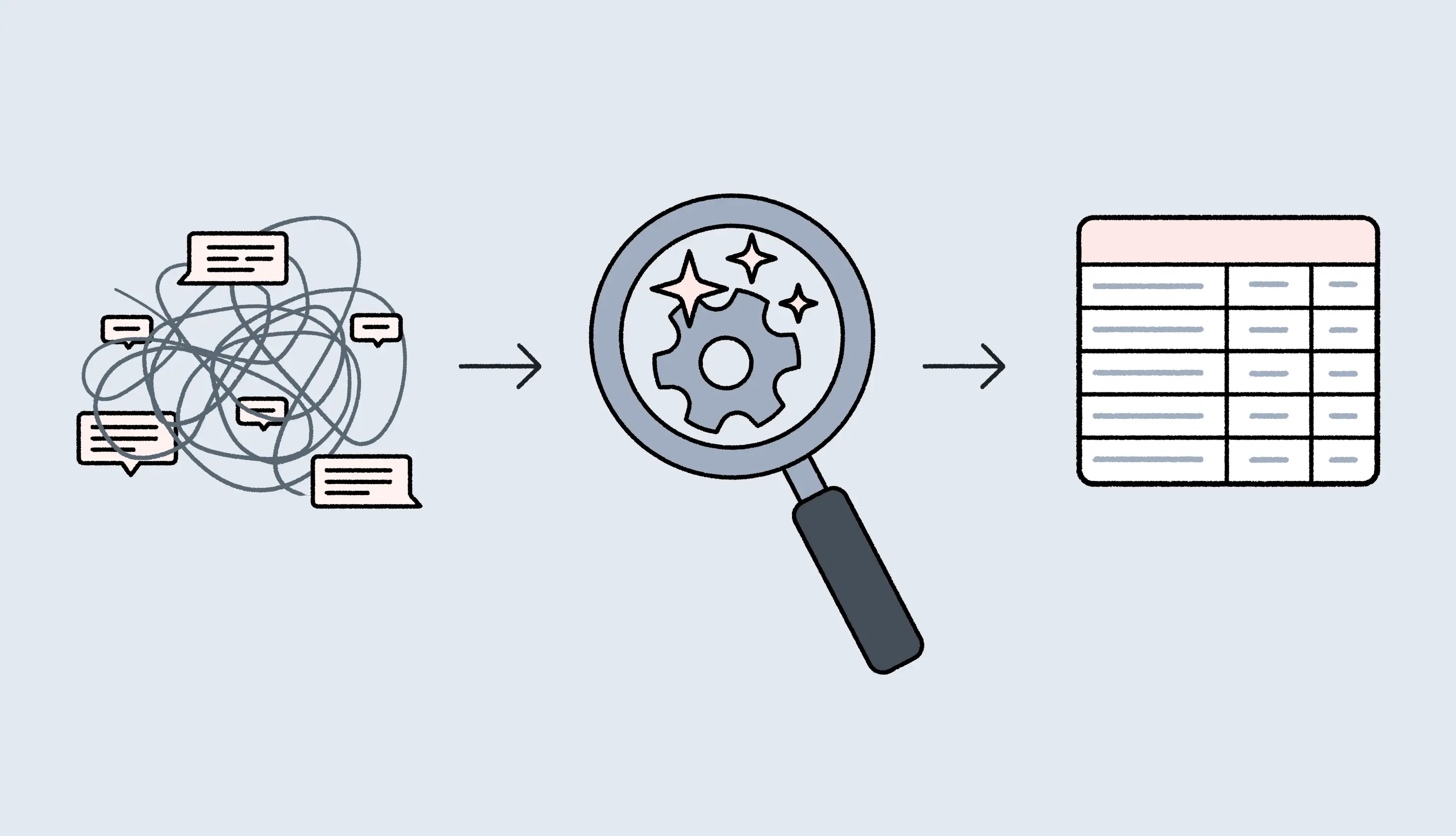

As of 2026, the Total Cost of Ownership (TCO) for enterprise localization has shifted from a per-word human model (~$0.20/word) to an orchestrated AI model (~$0.002/word). This 100x efficiency is driven by AI orchestration, which automates context retrieval (RAG) and eliminates manual project management overhead. These systems combine large language models with retrieval-augmented context, terminology databases, translation memory, and automated q

Updated on March 26, 2026·Mia Comic

The fine-tuning trap in AI translation

Fine-tuning sounds like the clean way to improve AI translation quality. You train the model on your content with the expectation it’ll learn your style. In practice, generic fine-tuning is where enterprise translation programs get stuck. The issue is, the model absorbs everything in the training mix. This includes old releases, mixed brands, and inconsistent phrasing, which means you end up with contextual contamination. That’s when the model starts making confident ch

Updated on February 11, 2026·Mia Comic

Term base best practices: How to build a living terminology system

Most term bases fail because they live where nobody works. A spreadsheet gets created, a few people bookmark it, and then the real work happens in the editor, in Slack, in Figma, and in whatever AI tool is generating the next draft. That gap is expensive. Terminology drifts, reviewers rewrite the same phrases, and “small” naming mistakes turn into brand inconsistency in translation, SEO issues, and support tickets that shouldn’t exist. This guide covers term base best pr

Updated on February 10, 2026·Mia Comic

How to automate context management in translation

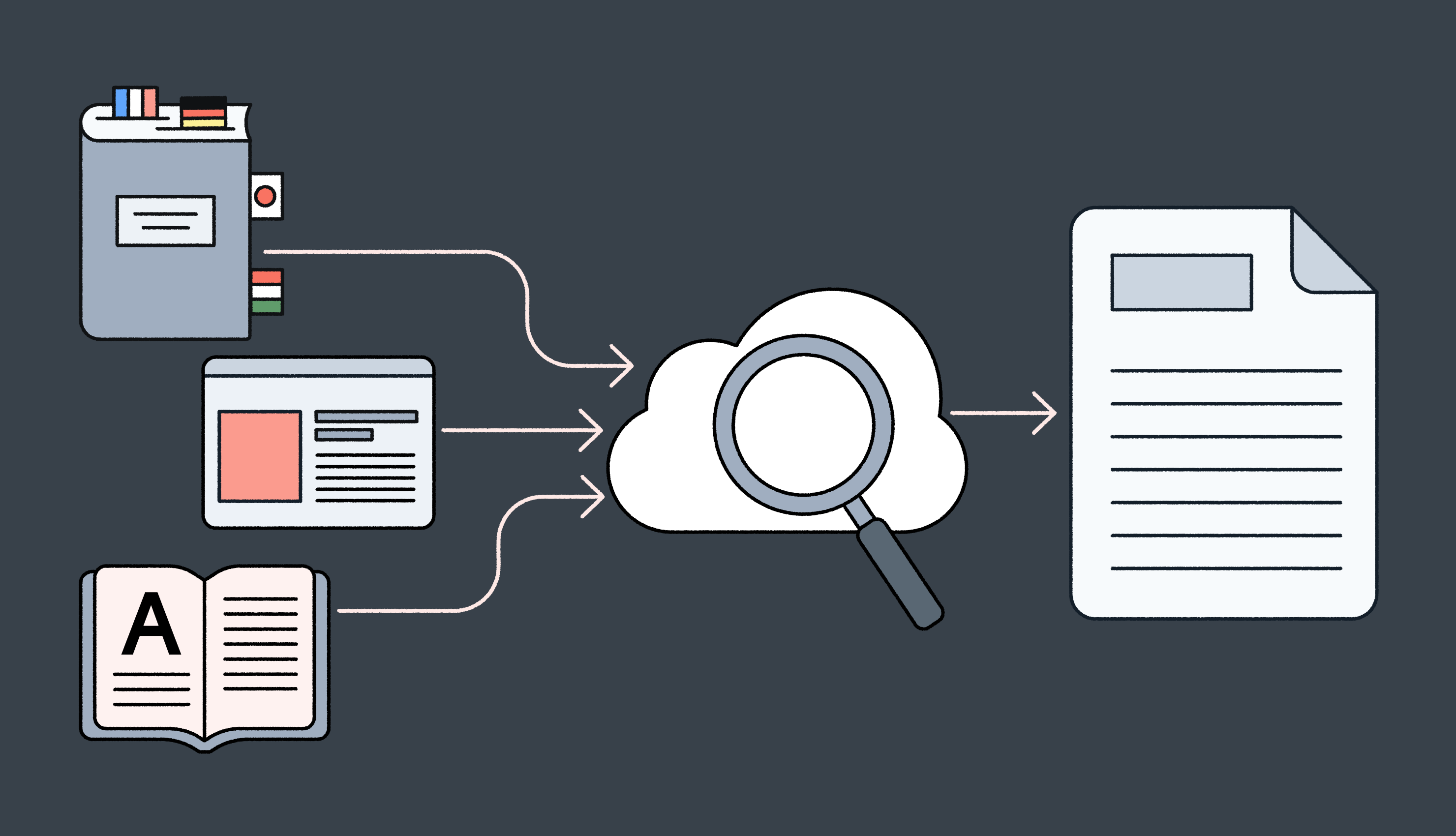

Most localization teams struggle with finding the right context. Translators jump between the TMS, Jira, Figma, Slack, and old docs just to understand a single string. Reviewers approve copy without ever seeing the screen it lives on. Developers spend hours every week explaining where text appears and what it must not break. All of this context switching in localization slows releases and drives up translation rework. On paper, it looks like “bad translation.” In reality, it

Updated on February 3, 2026·Mia Comic

How AI is changing design-stage localization

Designers work in Figma. Developers work in GitHub. Localization teams work in localization software. Each team operates in isolation, creating context-switching delays that slow down your launch timelines. Design-stage localization changes this equation by bringing translation into the design phase. And with AI integrated into your setup, this process can become even faster. Instead of waiting days for translations, designers get AI-translated strings in minu

Updated on January 29, 2026·Shreelekha Singh